Introduction

Pulsar is a Python server application that accepts jobs from a Galaxy server, submits them to a local resource, and sends the results back to the originating Galaxy server once they have been processed.

The Open Infrastructure is a set of tools that lets Cloud infrastructure providers easily deploy ready-to-go Pulsar endpoints. Each new Pulsar node extends the computing capacity of the Network and of the Galaxy servers connected to it.

The Open Infrastructure

The Open Infrastructure encompasses:

A virtual machine image, named Virtual Galaxy Compute Nodes (VGCN), providing everything needed to run Galaxy jobs.

Containerized tools and reference data shared through read-only repositories based on the CernVM File System (CVMFS).

Terraform for configuring Cloud infrastructures, including compute, network and storage services.

Ansible automation engine to perform Pulsar and/or Galaxy configuration and update routines.

This framework uses HashiCorp Terraform to install and configure a Pulsar endpoint on OpenStack from a virtual machine image named VGCN (see Requirements for more details). The Terraform script needs to access an OpenStack cloud via its API to:

upload a VM image of 4 GB (preferred, although preloading the image via the Dashboard interface is also supported);

access an IPv4 external network (that is, a network that can reach the internet);

obtain 1 public IP from a DHCP server enabled on the external network;

create an IPv4 private network for the VMs (preferred, although using a pre-existing network of this kind is also supported);

create a router to bridge the private network to the external network (optional, if this feature is provided by the Cloud network infrastructure);

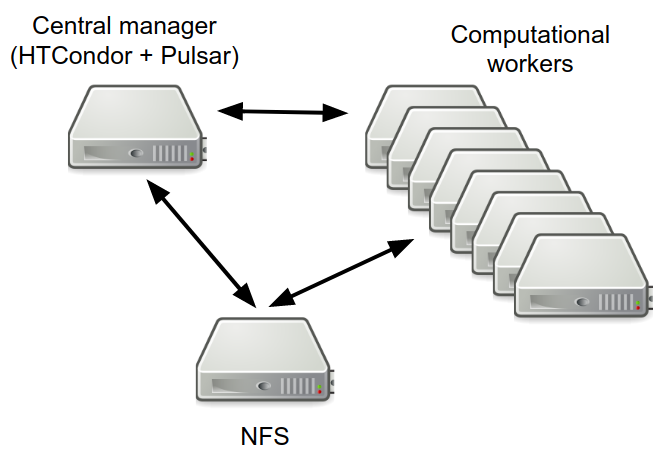

create one central manager where all services are installed;

create one NFS server;

create N worker nodes;

attach a storage volume to the NFS server;

create three secgroups;

upload an ssh public key to access the Central Manager VM.
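The steps above can be summarised as an inventory of what the Terraform script is expected to create. The following is only an illustrative Python sketch, not the project's Terraform code: the function `planned_resources`, its flags, and every resource name are hypothetical labels chosen for this example. The three flags model the steps described as preferred or optional.

```python
from collections import Counter


def planned_resources(n_workers, upload_image=True, create_network=True,
                      create_router=True):
    """Return (kind, name) pairs for the resources listed above.

    All names are hypothetical labels used only in this sketch.
    """
    if n_workers < 0:
        raise ValueError("n_workers must not be negative")
    resources = []
    if upload_image:    # the image may instead be preloaded via the Dashboard
        resources.append(("image", "vgcn"))
    if create_network:  # a pre-existing private network may be reused
        resources.append(("network", "private"))
    if create_router:   # optional when the cloud already provides routing
        resources.append(("router", "private-to-external"))
    resources.append(("instance", "central-manager"))
    resources.append(("instance", "nfs-server"))
    resources += [("instance", f"worker-{i}") for i in range(n_workers)]
    resources.append(("volume", "nfs-data"))  # attached to the NFS server
    resources += [("secgroup", f"secgroup-{i}") for i in range(1, 4)]
    resources.append(("keypair", "central-manager-access"))
    return resources


# Summarise a deployment with two workers by resource kind.
counts = Counter(kind for kind, _ in planned_resources(n_workers=2))
for kind, count in sorted(counts.items()):
    print(kind, count)
```

Switching off the three flags gives the inventory for a cloud where the image, the private network and the routing are already in place.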

Minimal setup

A minimal setup requires:

Central manager and NFS server nodes, each with 4 cores and 8 GB of RAM

Computational workers, each with 4-8 cores and 16 GB of RAM

A volume of 300 GB or more

These figures are lower bounds; more resources are better.
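To turn these figures into a quota request for a given number of workers, the arithmetic can be sketched as below. This is a rough estimate only: it assumes the GB figures for the nodes refer to RAM, and the names `NodeSize` and `minimal_quota` are hypothetical helpers, not part of the Open Infrastructure tooling.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class NodeSize:
    cores: int
    ram_gb: int  # assumption: the GB figures for nodes refer to RAM


# Minimal figures taken from the list above.
SERVICE_NODE = NodeSize(cores=4, ram_gb=8)  # central manager and NFS server
MIN_WORKER = NodeSize(cores=4, ram_gb=16)   # smallest suggested worker
MIN_VOLUME_GB = 300


def minimal_quota(n_workers, worker=MIN_WORKER):
    """Return the total resources for two service nodes plus n_workers workers."""
    if n_workers < 1:
        raise ValueError("at least one worker is needed to run jobs")
    if worker.cores < MIN_WORKER.cores or worker.ram_gb < MIN_WORKER.ram_gb:
        raise ValueError("worker size is below the minimal setup")
    return {
        "instances": 2 + n_workers,
        "cores": 2 * SERVICE_NODE.cores + n_workers * worker.cores,
        "ram_gb": 2 * SERVICE_NODE.ram_gb + n_workers * worker.ram_gb,
        "volume_gb": MIN_VOLUME_GB,
    }


print(minimal_quota(3))
# {'instances': 5, 'cores': 20, 'ram_gb': 64, 'volume_gb': 300}
```

With 8-core workers, `minimal_quota(3, NodeSize(cores=8, ram_gb=16))` raises the core count to 32 while the other totals stay the same.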

Architecture

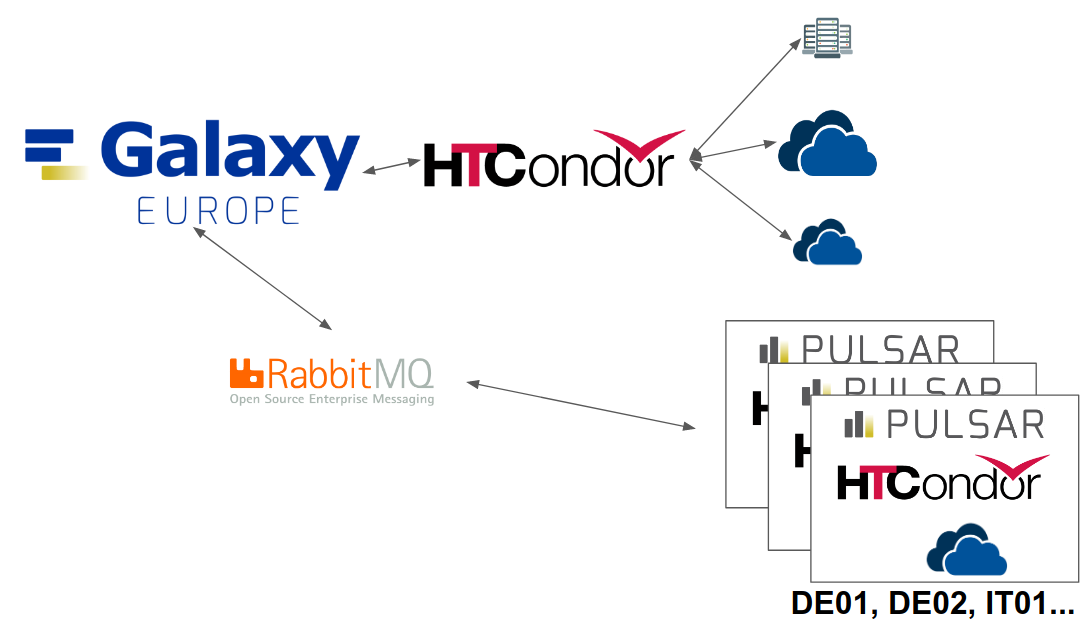

UseGalaxy.eu and the remote Pulsar endpoints communicate through a RabbitMQ queue.

The interesting aspect of this setup is that the Pulsar endpoints don’t need to expose any network ports to the outside, because:

the Galaxy server and the Pulsar server exchange messages through RabbitMQ;

the Pulsar server starts all the staging actions, reaching the Galaxy server through its API.
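The direction of each connection is what makes this work, and a toy in-process model may help to see it. In the sketch below, Python's `queue.Queue` stands in for RabbitMQ and plain method calls stand in for Galaxy API requests. The class and method names are hypothetical and do not correspond to Pulsar's or Galaxy's real code. Note that every call is initiated either by Galaxy towards the broker or by Pulsar towards the broker or Galaxy; nothing ever calls into the Pulsar endpoint.

```python
import queue


class GalaxyServer:
    """Stand-in for the Galaxy side: publishes jobs and exposes an API."""

    def __init__(self, broker):
        self.broker = broker
        self.inputs = {}   # job_id -> input data reachable through the API
        self.outputs = {}  # job_id -> results sent back by Pulsar

    def submit(self, job_id, data):
        self.inputs[job_id] = data
        self.broker.put({"job_id": job_id})  # Galaxy -> broker

    # The two methods below model API endpoints that Pulsar calls.
    def api_download_input(self, job_id):
        return self.inputs[job_id]

    def api_upload_output(self, job_id, result):
        self.outputs[job_id] = result


class PulsarEndpoint:
    """Stand-in for the Pulsar side: it only makes outbound calls."""

    def __init__(self, broker, galaxy):
        self.broker = broker
        self.galaxy = galaxy

    def process_next(self):
        message = self.broker.get_nowait()             # Pulsar -> broker
        job_id = message["job_id"]
        data = self.galaxy.api_download_input(job_id)  # Pulsar -> Galaxy API
        result = data.upper()                          # placeholder for the tool run
        self.galaxy.api_upload_output(job_id, result)  # Pulsar -> Galaxy API


broker = queue.Queue()
galaxy = GalaxyServer(broker)
pulsar = PulsarEndpoint(broker, galaxy)

galaxy.submit("job-1", "acgt")
pulsar.process_next()
print(galaxy.outputs)  # {'job-1': 'ACGT'}
```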

Dependencies

All tool dependencies are resolved by UseGalaxy.eu through a container resolver mechanism that provides a suitable Apptainer (formerly Singularity) image for each tool. The images are made available locally through a distribution system backed by a network of CVMFS servers.
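As a simplified illustration of what this means on a worker node, the sketch below builds an `apptainer exec` command that runs a tool inside an image read from a CVMFS mount. The repository path, the image name and the helper `containerized_command` are hypothetical placeholders, and the command that Galaxy and Pulsar actually generate is likely to carry further options.

```python
import shlex
from pathlib import PurePosixPath

# Hypothetical mount point: the real CVMFS repository path is not given here.
IMAGE_ROOT = PurePosixPath("/cvmfs/example.repository/images")


def containerized_command(image_name, tool_argv):
    """Wrap a tool command line so that it runs inside an Apptainer image."""
    image = IMAGE_ROOT / image_name
    return ["apptainer", "exec", str(image), *tool_argv]


# "samtools:1.17" is an illustrative image name, not a documented one.
argv = containerized_command("samtools:1.17", ["samtools", "--version"])
print(shlex.join(argv))
```

Because the image is read from a read-only shared repository, no per-node installation of the tool is needed in this model.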